News

Another data breach has affected countless unsuspecting people due to lax security measures. Billions of personal records may soon be leaked online…

Creating and growing a successful law firm amidst an ever-evolving landscape demands drive, innovation, and adaptability—a combination of values that…

In the aftermath of a life-altering car crash, Ben found himself faced with uncertainty and pain.

Recalling the moment of impact, Ben…

Verdict: $20.8M Auto Case

It was early morning on Nov. 15, 2019, when Sheriff’s Deputy Nicholas Orrizzi left home for work in Florida’s Seminole…

The following product recalls have been announced this week—make sure you’re in the know.

Home Design Upholstered Low-profile Standard and…

The following product recalls have been announced this week—make sure you’re in the know.

Retrospec Scout Kid’s Bike Helmets

Retrospec is…

A business’s cybersecurity provider could actually be its weakest point of vulnerability.

If you or your business is relying on a Managed Security…

Accidents & Incidents

FMLA Leave: Your Rights, Legal Protections, and How Morgan & Morgan Can Help

Balancing work and personal life can be challenging, especially when faced with a serious health condition or the need to care for a loved one. The…

Illegally Parked Car Accidents: Who’s Liable and What You Need to Know

Accidents involving illegally parked cars are more common than many people realize. Whether it’s a vehicle blocking a fire hydrant, parked too close…

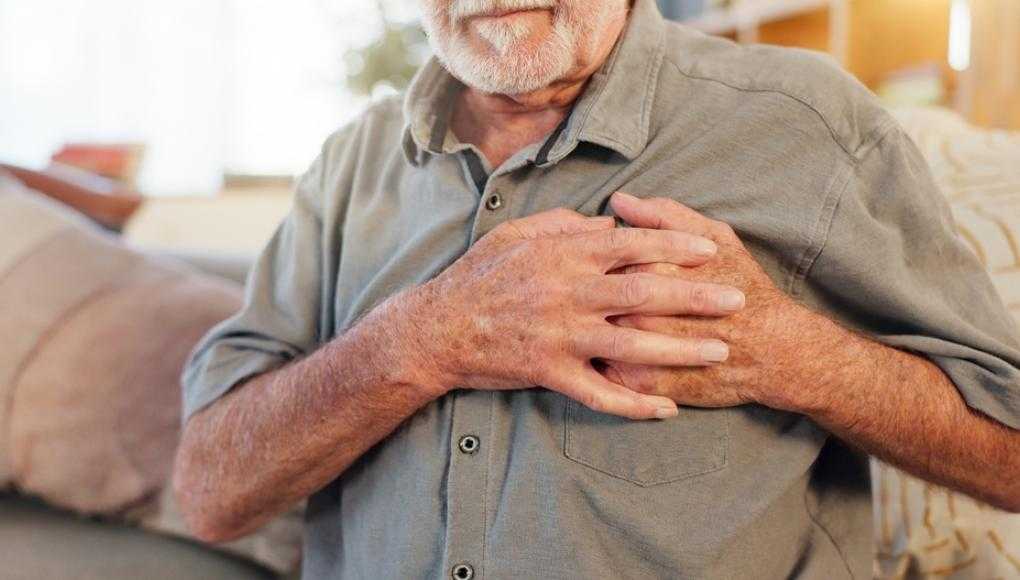

What to Do if Your Chest Hurts After a Car Accident

A car accident can leave you shaken, disoriented, and in pain, and one of the most alarming symptoms you might experience after a crash is chest pain…

Injured in a Hit and Run Accident? Here Are Your Legal Options

Hit and run accidents are deeply upsetting. If you have experienced one, you understand how the injuries and property damage can be confusing,…

Injury Prevention

Scrolling Smart: Morgan & Morgan's Guide to Social Media Safety

Like, share, repost, tag, and follow are all common words on social media. Hacking, leaks, stalking, and mental health are…

How to Protect Yourself From Tax Refund Scams

If you haven’t filed your taxes yet, you should file them as soon as you can, before someone else does.

With July 15 on the horizon, the Internal…

Insurance

Exploring Homeowners Insurance Costs in Atlanta: What You Need to Know

Navigating homeowners insurance in Atlanta can seem daunting, with rates significantly higher than both the state and national averages. Understanding…

How to Appeal a Car Insurance Decision in Miami

Understanding Car Insurance Claim Denials

When your car insurance claim is denied in Miami, it can feel like a major setback, but it's important…

Understanding Personal Injury Protection (PIP)

Personal Injury Protection (PIP), also known as no-fault insurance, is designed to cover expenses such as medical bills, lost wages, and funeral…

How Can Vandalism Impact My Business Interruption and Insurance Claims?

Across the country, peaceful protests have called for an end to police brutality. However, some rioters have taken advantage of these peaceful…

Newsroom

Be Their Hero: Safe Driving Starts With You

Be their hero behind the wheel. Hear John Morgan’s powerful message on safe driving. Kids are impressionable—it’s a fact.…

The Week in Recalls: Week of March 21, 2025

The following product recalls have been announced this week—make sure you’re in the know. 2020–2021 Cadillac CT4, 2020–2021 Cadillac CT5, 2019–…

The Week in Recalls: Week of March 7, 2025

The following product recalls have been announced this week—make sure you’re in the know. Babyjoy High Chairs: Costway has recalled the…

The Week in Recalls: Week of February 28, 2025

The following product recalls have been announced this week—make sure you’re in the know. Tesla 2023 Model 3 and Model Y Vehicles: Tesla is …

The Case Process

3 Reasons Why You Need America’s Largest Injury Law Firm to Take on a Big Company

When you’re facing a legal battle against a major corporation, the stakes are high. Whether it’s a personal injury case, a workplace dispute, or a…

Hiring Your First Lawyer: A Guide for First-Time Legal Representation

When life throws unexpected challenges your way, whether it’s a car accident, a workplace dispute, or a denied insurance claim, you may find yourself…

How Social Media Can Affect Your Personal Injury Lawsuit

Most of us are constantly connected through social media. Whether it’s Facebook, Instagram, TikTok, X (formerly Twitter), or LinkedIn, we share every…

Average Value of a Bodily Injury Claim

Suffering a bodily injury can keep you out of work for days, weeks, or even months on end. Without a steady income, you quickly fall behind on paying…