Morgan & Morgan Sues Tesla Over Allegedly Unreliable Autopilot System

Morgan & Morgan filed a personal injury and consumer protection lawsuit against automaker Tesla today, alleging that the company has duped consumers into believing its autopilot add-on feature can safely transport passengers at high speeds with minimal input and oversight from those passengers.

In the lawsuit filed in Florida state court, attorneys Mike Morgan, Steven E. Nauman, and Branden Weber allege that our client, Shawn Hudson, suffered injury when the autopilot feature of his 2017 Tesla Model S failed to detect the presence of a disabled Ford Fiesta on the roadway, causing the Model S to crash into the vehicle at about 80 mph. Mr. Hudson suffered permanent injuries as a result of the crash.

“If this crash had happened a little differently, it would have killed him,” Morgan & Morgan attorney Matt Morgan said at a press conference in which he was joined by Mike Morgan and Mr. Hudson.

Attorney Mike Morgan added that “Mr. Hudson became the guinea pig for Tesla to experiment with their fully autonomous vehicle.”

Despite Tesla’s claim that its autopilot system is designed for use at highway speeds, the autopilot system is unable to reliably detect stationary objects, such as disabled vehicles or other foreseeable roadway hazards, posing an inordinately high risk of high-speed collisions, severe injury, and death both to Tesla’s passengers and to the driving public, the lawsuit alleges.

Furthermore, our suit alleges, Tesla sells this feature for an additional cost despite knowing it doesn’t live up to its autopilot claims. Mike Morgan noted that even though the autopilot feature is sold as a self-driving solution, deep in the car manual it says that at speeds over 50 mph the autopilot feature has trouble detecting stationary objects. Because Tesla also doesn’t recommend using the feature in the city, that basically means Tesla is “selling nothing,” Mike Morgan says.

Mr. Hudson relied on those claims when using the feature in his Model S, and in doing so suffered severe personal injuries when his car struck the disabled Ford on the highway.

“It was a very hard impact. I can still feel that impact. It’s like hitting a wall,” Mr. Hudson said at the press conference.

Is 'Autopilot' a Deceptive Name?

Last year, Mr. Hudson was in the market for a new car. He works about 125 miles away from his home and each leg of his commute is two hours, primarily on Florida’s Turnpike (Florida State Road 91). With that in mind, Mr. Hudson earnestly sought a vehicle that could help alleviate the grueling and time-consuming nature of his commute.

During his search, Mr. Hudson saw numerous Tesla ads promoting its vehicles’ ability to drive long distances on the highway with minimal input or oversight from the driver. Encouraged by these ads, Mr. Hudson went to a Tesla dealership to get more information. A salesperson confirmed the ads’ claims, telling Mr. Hudson he could purchase the autopilot add-on and it would allow the car to essentially drive itself, according to the lawsuit.

“Tesla’s sales representative advised [Mr.] Hudson on the numerous safety features and benefits of driving a Tesla vehicle equipped with autopilot, including the vehicle’s ability to brake by itself and automatically avoid roadway hazards so as to prevent a collision,” the complaint alleges. Furthermore, the lawsuit says that the salesperson told Mr. Hudson that if a Tesla vehicle detected a hazard, the autopilot system would also alert passengers so they could take control of the vehicle if necessary.

Tesla’s sales representative reassured Mr. Hudson that all he needed to do as the “driver” of the vehicle was to occasionally place his hand on the steering wheel and that the vehicle would “do everything else,” the lawsuit says.

Mr. Hudson and the salesperson took a test drive of a Model S. The salesperson demonstrated the autopilot feature and it worked as advertised, according to the complaint. On the strength of that, Mr. Hudson requested a loaner vehicle he could drive for a couple of days to try it out on his own. After a weekend with it, he was sold and bought a 2017 Model S with the $5,000 autopilot upgrade.

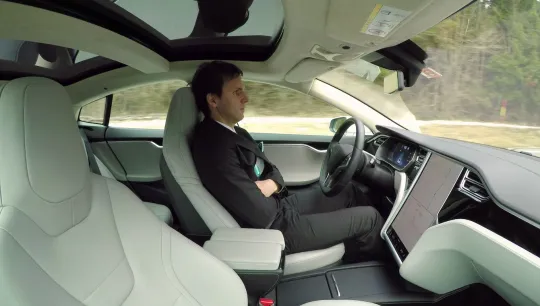

Tesla Model S Autopilot Injury

Over the next year, Mr. Hudson drove his Model S almost 100,000 miles, primarily on Florida’s Turnpike. The autopilot feature allowed him to relax more on his commute, and the more he drove the more he trusted Tesla’s autopilot system. But on the morning of Oct. 12, 2018, that all changed. In fact, his entire life changed.

He was traveling southbound at about 80 mph in the left lane with autopilot engaged. He was fully confident his car would “do everything else,” just as Tesla promised. Little did he know that his Model S was approaching an abandoned Ford Fiesta that had stalled out in the left lane.

Without warning, the Model S slammed into the disabled vehicle, completely destroying the front of the car and leaving Mr. Hudson with severe permanent injuries.

The autopilot feature failed to detect the disabled vehicle that had been negligently left on the roadway, despite Tesla’s numerous claims that its autopilot would detect such hazards and avoid collisions. Mr. Hudson wouldn’t have purchased the autopilot upgrade if he had known it wouldn’t perform as advertised.

“... Tesla knows that its autopilot system does not work as promised and cannot reliably detect stationary objects located in the roadway, including disabled vehicles and other foreseeable hazards,” the lawsuit says. “Notwithstanding, Tesla continues to tout its autopilot system as a technologically advanced safety feature that allows Tesla vehicles to safely transport passengers at highway speeds from one point to another, with the capability of avoiding common vehicular hazards.”

The lawsuit seeks to recover compensation for the injuries and damages Mr. Hudson suffered as a result of the autopilot’s failure and the negligent disposal of the disabled vehicle on the highway.

(Feature photo by Flystock/Shutterstock.com is not an actual depiction of our client or his car.)
